Anthropic's Ingenious Product Strategy May Not Be What It Seems

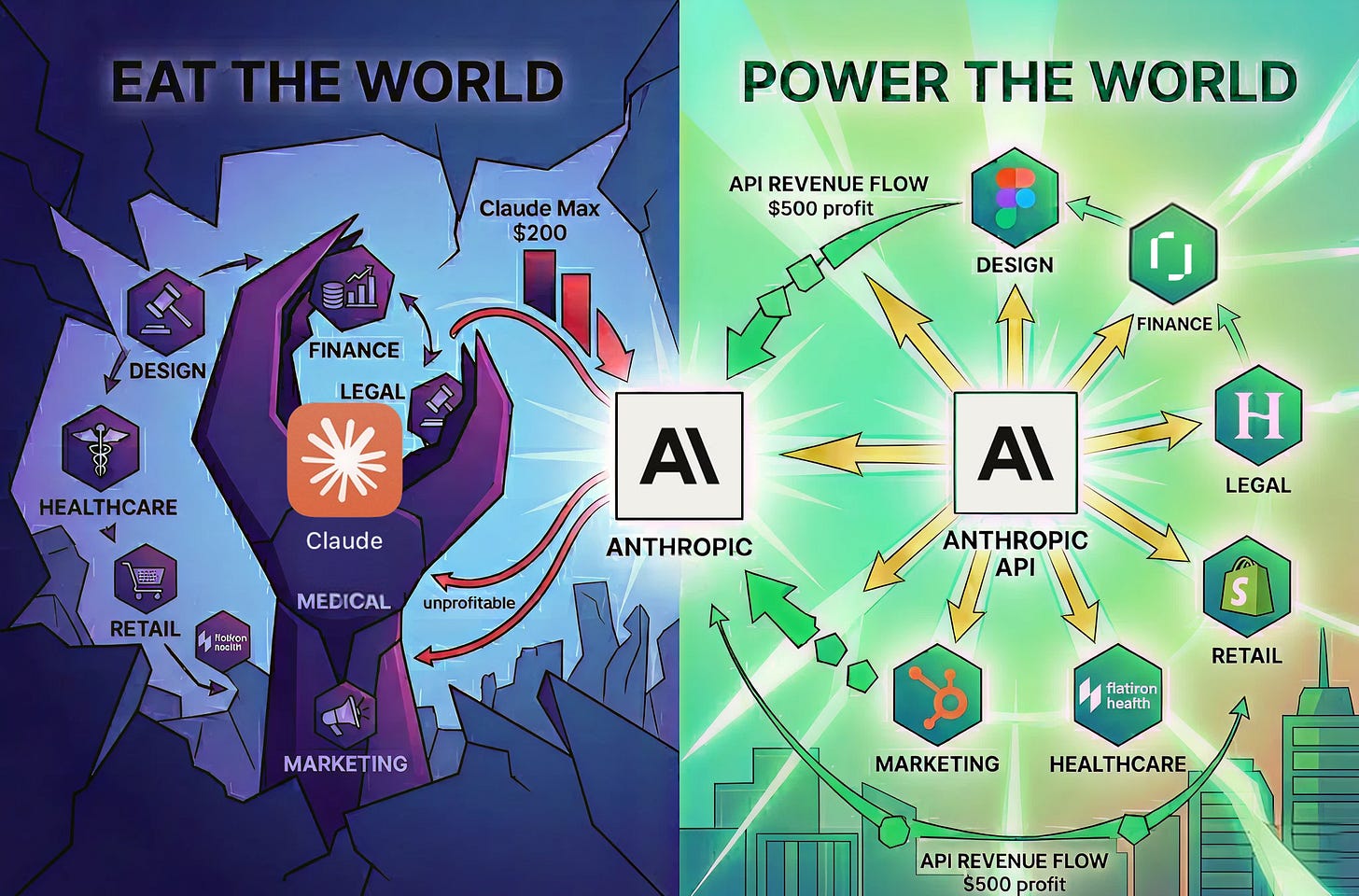

Claude may look like it's trying to eat the world, but the real strategy is likely more astute, and more interesting.

Anthropic just shipped updated finance and legal skills for Claude, designed to encode the day-to-day workflows of bankers, research analysts, lawyers, paralegals, and more.

The market did what it does when Anthropic ships into a new vertical. Stocks of incumbents wobbled. Headlines asked whether the next generation of finance tools would be “Sherlocked” by a model provider. Every company within range, from Figma in design to Rogo in banking, Harvey in legal, and Glean in enterprise search, likely fielded nervous emails from investors asking how they would adapt, and endured countless LinkedIn and Twitter posts declaring their businesses “cooked.”

On the surface, it might appear that Anthropic’s goal is to get everyone to use Claude for everything. But I think this gets the strategy backwards. I suspect the market shock is very intentional, but the point is not to replace SaaS companies with Claude plugins. I don’t even think Anthropic expects, or wants, most consumers to seriously adopt Claude for everything.

Let’s examine why, and what they’re trying to do instead.

Anthropic’s Business

At its core, the business of an AI lab like Anthropic is to build the best models, and then charge more for their use than it costs to serve them. Every other product move, from direct-to-consumer agents like Claude.ai to built-in capabilities, skills, and an app marketplace, flows from that one equation. The interesting question isn’t whether Anthropic can absorb the app layer. It’s which revenue path actually lets them honor that core bet.

To that end, the point of releasing industry-specific skills is to raise the floor on what a generic AI assistant can do, and to scare other companies into building AI-native products as fast as possible. Every app-layer company looks at the new bar and realizes it needs to clear it by a foot, not an inch, to keep justifying its pricing. That fear is the feature, not the bug.

The math on a single user

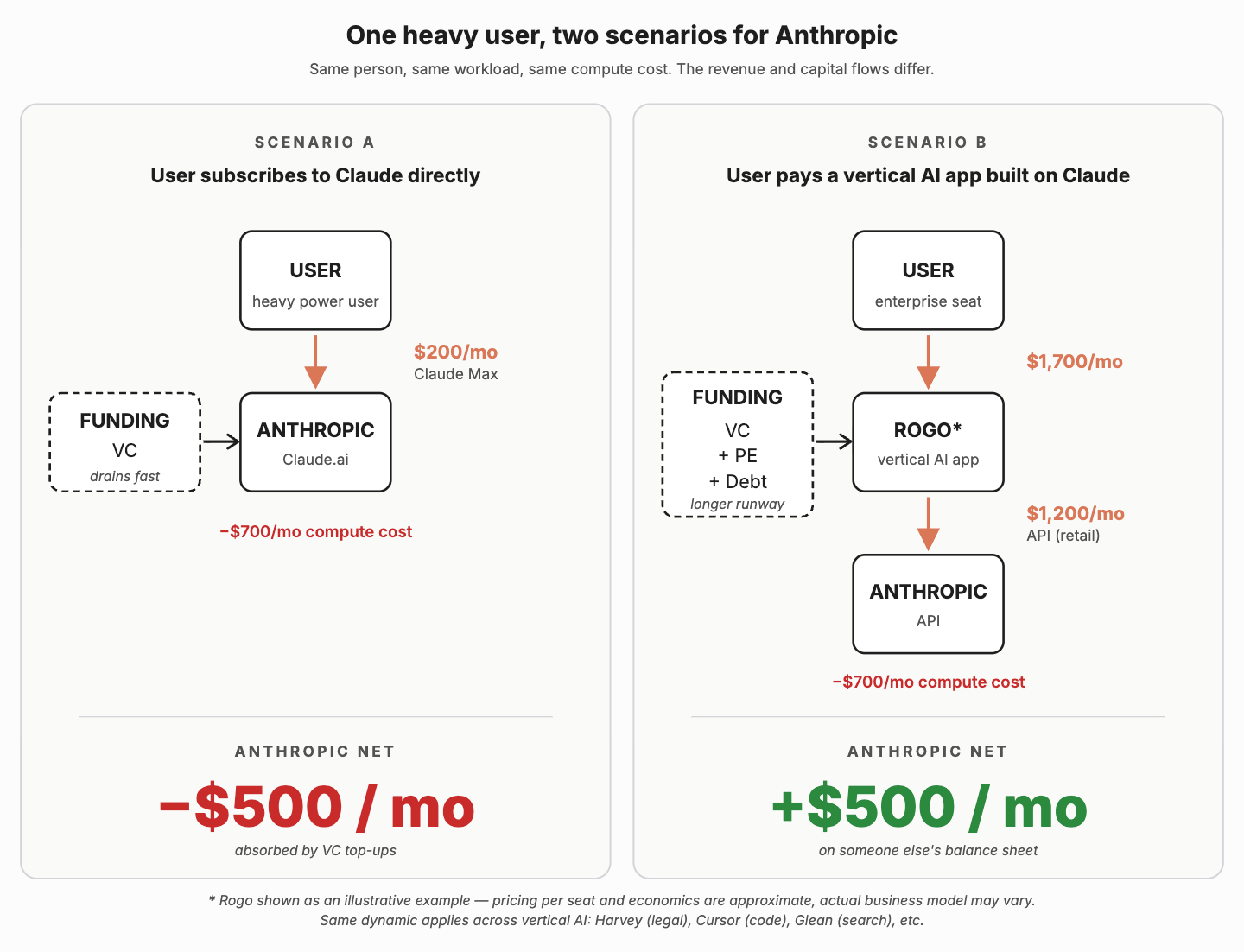

Take one investment banker as a worked example. To keep the comparison apples-to-apples, assume the same banker doing the same work and generating the same compute load on Anthropic. Call it ~$700/mo in compute costs to serve that user.

In the direct world, the banker subscribes to Claude Max at $200/mo. Anthropic earns $200 against $700 of compute. Net: −$500/mo. Anthropic has effectively conceded this publicly: the top end of Max is gross-margin negative. They subsidize heavy users because that’s where the brand, the data, and the product loop live.

In the indirect world, the same banker uses a product like Rogo, an AI agent purpose-built for investment banking, which reportedly charges around $20,000 per seat per year. That’s roughly $1,700/mo. Rogo serves the banker by calling Anthropic’s API at retail rates, and unlike Claude Max’s flat fee, the API meter scales with actual usage. For deep-AI products like this, a substantial share of revenue flows through to inference. Let’s say it’s ~$1,200/mo of API spend per Rogo seat. Anthropic earns $1,200 against the same $700 in compute. Net: +$500/mo of profit for Anthropic on that user.

Note: I don’t actually have hard numbers on Rogo’s pricing model, and companies like this often also charge for incremental compute beyond a usage cap. This example is purely illustrative.
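The arithmetic in this example is simple enough to check in a few lines. This is a minimal Python sketch that uses only the illustrative figures from the text (~$700/mo compute, $200/mo Claude Max, ~$20,000/yr per Rogo seat, ~$1,200/mo API spend). The constant names and the `net_margin` helper are hypothetical, and none of the inputs are real Anthropic or Rogo data.

```python
# Illustrative inputs from the worked example; assumptions, not real data.
COMPUTE_COST = 700           # assumed monthly cost to serve one heavy user
MAX_SUBSCRIPTION = 200       # Claude Max flat monthly fee
ROGO_SEAT_PER_YEAR = 20_000  # reported per-seat annual price (approximate)
API_SPEND = 1_200            # assumed monthly API spend per Rogo seat


def net_margin(revenue: float, compute_cost: float) -> float:
    """Monthly revenue to Anthropic minus its cost to serve the user."""
    return revenue - compute_cost


# Direct path: Anthropic collects the flat subscription fee.
direct = net_margin(MAX_SUBSCRIPTION, COMPUTE_COST)

# Indirect path: Anthropic collects the metered API spend from the app.
indirect = net_margin(API_SPEND, COMPUTE_COST)

# What the seat costs per month, and how much of it flows to inference.
seat_monthly = ROGO_SEAT_PER_YEAR / 12
pass_through = API_SPEND / seat_monthly

print(f"Direct (Claude Max): {direct:+.0f} $/mo")
print(f"Indirect (via Rogo): {indirect:+.0f} $/mo")
print(f"Rogo seat price:     {seat_monthly:.0f} $/mo")
print(f"Share of seat price passed to inference: {pass_through:.0%}")
```

Under these assumptions, roughly 72% of the seat price flows through to inference, which is one way to read “a substantial share of revenue” in this example. Swapping in different inputs shows how sensitive the sign of Anthropic’s margin is to whether revenue is flat or metered.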

The key detail is that consumers are generally averse to usage-based pricing. They are used to paying a monthly subscription and knowing exactly what it costs. Businesses, on the other hand, are much more amenable to paying for usage or outcomes, and they are willing to pay a lot more when they can compare a product’s output to the cost of hiring additional people. Businesses, unlike consumers, are also often propped up by large sums of VC equity or private debt. That’s a lot of capital up for grabs.

This isn’t unique to finance, of course. Harvey runs the same model in legal. Cursor in coding. Glean in enterprise search. And pre-AI companies like Figma are aggressively adapting their businesses to this new paradigm. Vertical AI companies routinely charge multiples of what a direct Claude subscription costs, with inference flowing back to the model provider underneath at metered rates.

And this is key because different industries have different appetites for usage and spend. If you try to serve lawyers (who historically bill by the hour), bankers (who make their money on deal fees), designers, doctors, and others with the same $200/mo Claude subscription, you’re going to leave a lot of money on the table. Vertical software companies, by contrast, understand their markets deeply, can set their own pricing and product strategies, and can optimize their own revenue and gross margin profiles. That lets Anthropic capture far more of each industry’s willingness to pay, and avoid much of the deadweight loss a one-size-fits-all price creates.

The math at a corporate level

There’s a capital story underneath this too. Anthropic absorbs the consumer product loss out of its own VC funding, a single bucket that needs constant top-ups. The app layer absorbs the indirect-path risk out of its own capital stack: VC, growth equity, and increasingly private debt. That deeper pool is what gives a Rogo or a Harvey the runway to pay Anthropic at full API retail per seat. From Anthropic’s perspective, every vertical AI company is a separate fundraise that ultimately feeds the API line item.

Every frontier lab has figured this out

Every major model lab has independently arrived at the same conclusion: consumer AI is brutal to run profitably. OpenAI launched ChatGPT as a consumer hit and has spent the last two years quietly pivoting toward the enterprise. ChatGPT is what they’re known for, but the bulk of the actual margin potential comes from their business and enterprise tiers, their API platform, and their custom-model deals with large customers. That’s also where they’ve ramped go-to-market spend to compete head-to-head with Anthropic. ChatGPT the consumer product is the brand, the funnel, and the data engine. It is not the whole business.

A few years ago, Sam Altman went on a podcast and said that if OpenAI couldn’t figure out a sustainable consumer model, ads were the option of last resort. Reports this year suggest ChatGPT is now actively building ads infrastructure. That’s the kind of move you make when subscription revenue can’t cover your compute bill.

Google has the same problem but can subsidize Gemini with cash flow from search and cloud. Meta has the same problem and gives Llama away on purpose, because commoditizing the model layer protects its app business from the closed labs.

The big AI model labs have decided that the more sustainable business is being the infrastructure, not the consumer product. Let someone else figure out how to charge a banker $20K a year. Take the API spend.

The real audience for the vertical skills isn’t users

When Anthropic ships finance skills, the move looks competitive. It is. But the real target isn’t the end users who try them. It’s the engineering teams at the companies building on top of Claude.

AI compute is becoming increasingly commoditized, and AI product companies often don’t pick a single model. They route across frontier models depending on the task. Anthropic doesn’t automatically capture 100% of any app’s compute. They have to earn it, every quarter, by being the best model for the workflows the app cares about.

That’s why the Skills releases matter. The finance skills aren’t just a demo for bankers. They’re a signal to the engineering teams at every company building vertical AI: Claude understands your domain. Long-context handling tuned for 10-Ks. Tool-use chains optimized for DCF walks. File parsing for pitch books. Every workflow-specific capability is a recruitment pitch aimed at the developers deciding which model to route a query to.

The same launch has two audiences. End users see a competitor. Engineers see a sales pitch.

That’s the flywheel. Anthropic’s product team raises the bar on what AI in a given vertical looks like. The app-layer company has to clear that bar meaningfully. To do that, they spend more on whichever model gets them there. The model best positioned to be that choice is the one shipping the most workflow-specific capabilities. Anthropic’s pitch is that the model is Claude.

The opportunity for vertical AI products

For app-layer companies, the takeaway is almost the opposite of what the stock market reaction suggests. Anthropic shipping into your vertical is, weirdly, an endorsement of the vertical and a forcing function to build better products, faster. And while Anthropic’s skills may seem designed to make app-layer products obsolete, Anthropic’s incentives point the other way. It is likely to go only so far into any vertical, leaving room to build a more tailored, complete product set for customers. That means there is still a ton of room to win. The challenge is doing so profitably. The companies that read these launches as existential threats and freeze are the ones that will get absorbed. The companies that read them as a starting line and sprint past Anthropic’s generic version are the ones that will thrive.

As for Anthropic, the strategy is brilliant. They’re not trying to win every workflow themselves. They’re using their own product to set the pace, scare the app layer into shipping faster, and recruit the engineering teams choosing which model to bet on. They let the rest of the market spend its VC funding doing what Anthropic doesn’t want to do: building vertical products, figuring out how to price for every industry, hiring sales teams, owning customer support. Every one of those products routes dollars back to Anthropic’s API at the bottom of the stack.

A large share of every dollar of vertical AI spend, regardless of who collects it from the end user, ultimately routes to one of a few large AI compute providers. The strategy for the labs is to maximize their share of a seemingly infinite TAM.

And that’s a much bigger business than selling subscriptions.